Settle a bet for me.

Is this interview with Keanu Reeves talking about deepfakes a deepfake?

Hear me out on this!

Keanu Reeves is a favorite in the deepfake community.

You can’t unsee him as Forrest Gump. (Don’t say I didn’t warn you.)

In 2019, “he” went viral for stopping a robbery.

So this would make for pretty epic marketing, not only for The Matrix Awakens game demo he’s promoting, where an artificial but uncanny Keanu asks “How do we know what is real?”, but also for the tech undergirding the game.

“Synthetic media” is the feel-good term for what we uneasily refer to as “deepfakes.”

In this campaign, Reeves isn’t just selling us the game or the movie, he’s selling us on synthetic media.

Much of the coverage of this interview focuses on Reeves laughing at NFTs like Mr. Magoo and his apparent glee at the idea of his likeness being used for metaverse pornography. (Carrie-Anne Moss, on the other hand, says leave me out of it.)

But Epic Games, the company behind Unreal Engine and The Matrix Awakens, doesn’t just want to make video games. It wants to build the metaverse.

When I look at it like a marketer — a sickness of mine — I can’t ignore how, when a giggling Keanu Reeves presents this technology to us, it trivializes and distracts from the greater issues at play.

It's a brilliant marketing move on Unreal Engine’s part:

Take the guy synonymous with navigating the tension between reality and illusion and make him your brand ambassador.

Keanu’s character in The Matrix saves the world from unreality, so his collaboration with Unreal Engine lends credibility to a technology the public feels ambivalent about.

“Neo” wouldn’t cosign synthetic media if he thought it could be a problem, right?

Debuting this tech in tandem with the new Matrix Resurrections movie coming out later this month makes it seem like this technology will only affect our day-to-day lives in some far-off dystopian future, but the truth is:

The future is here.

And it’s coming to a funnel near you.

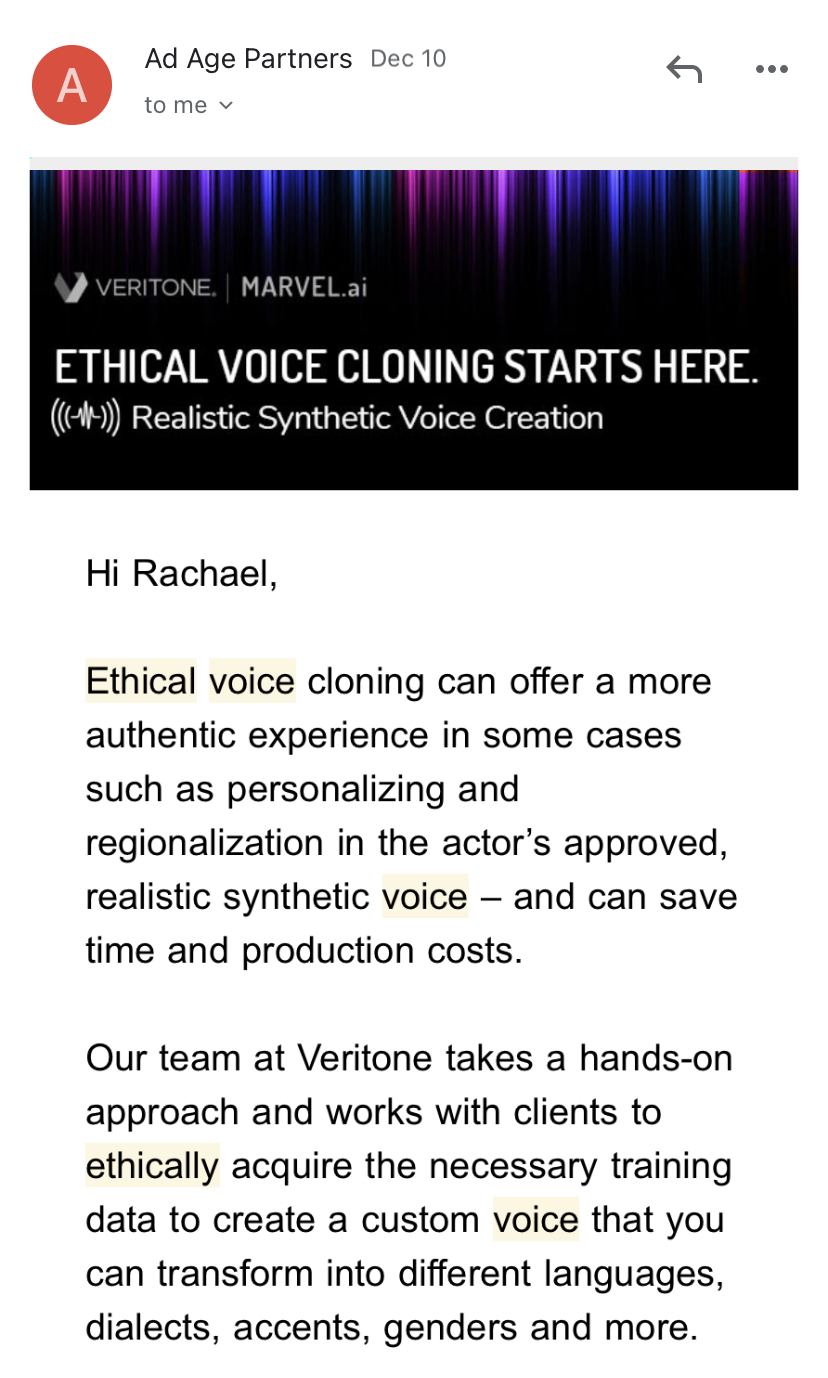

Last week, I received an email campaign asking me if I was ready to leverage the power of “ethical” voice cloning.

The email urged me to download a white paper on “The Truth About Deepfakes, Synthetic Voice, and Voice Cloning.”

There is no pitch and no easy way for me to purchase. The company clearly doesn’t want me to buy anything. Yet. In fact, I bet they don’t care if I ever buy their product at all.

Like Unreal Engine, they just want my buy-in to the concept.

This email is preempting my future pain points and objections around this tech.

As synthetic media becomes more mainstream, inevitably opening up so many cans of worms we’ll have to learn how to clone fish, its makers have already been diligently branding it and shaping the conversation before it turns into an uproar.

Nina Schick, author of Deepfakes: The Coming Infopocalypse, forecasts that at the rate this technology is moving, by the year 2030, over 90% of media could be synthetic.

“HOW THE HELL?!” you may ask. (Just me?)

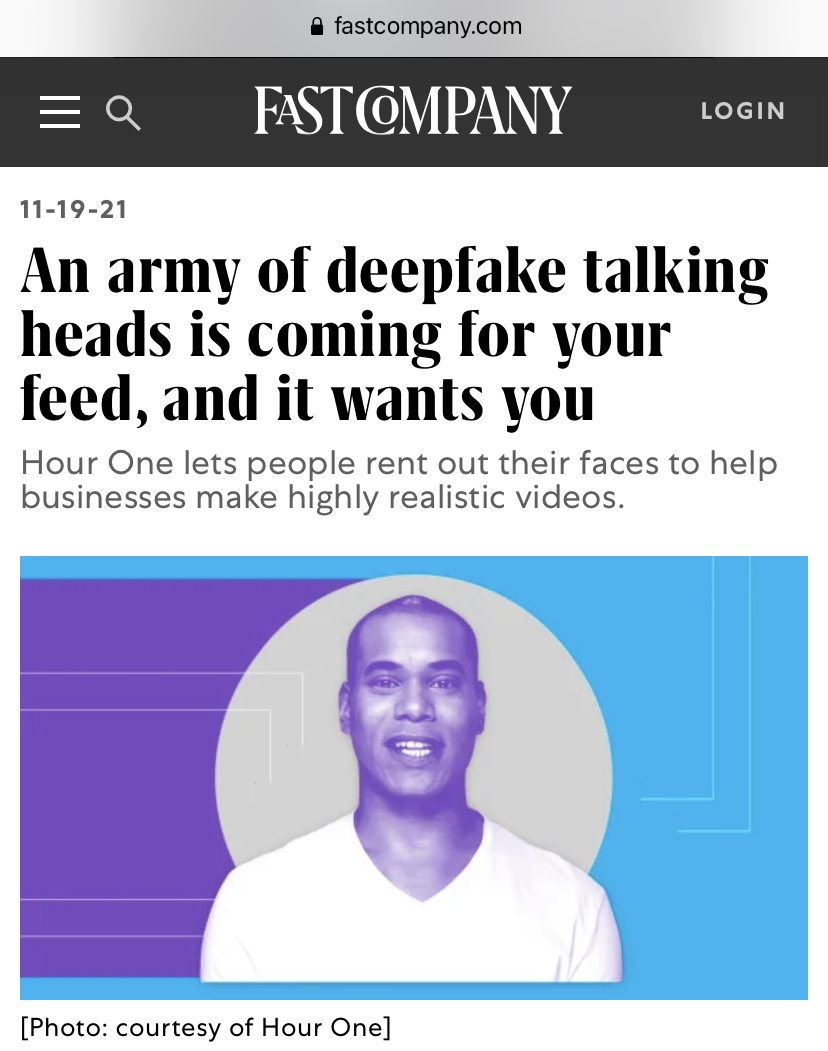

I think the answer may lie in The Matrix...and this Fast Company article by Alex Pasternack, featuring one of many startups bringing artificial media to businesses of all sizes: “An Army of Deepfake Talking Heads Is Coming For Your Feed, and It Wants You.”

Hour One is “the world's leading trusted provider of synthetic characters based on real-life people.”

Trusted? By whom, exactly?

This copy is more like a wish than anything else, one their marketing team is hoping will come true if they can position this technology in just the right light, using PR like this article to preempt our concerns.

“It’s not replacing any personal connection,” Hour One cofounder Lior Hakim tells Fast Company.

“But [real-world interactions] are not scalable, just by the fact that they’re bound by time and the physical aspect of, well, us.”

Hakim paints a picture of a “character economy” where we could all eventually become synthetic versions of ourselves.

In this way, synthetic media is being sold as the answer to our capitalist prayers:

“Dear God, if only I could clone myself! Then I could finally get everything done!”

The fact is, Unreal Engine doesn’t have to sell this synthetic tech too hard — their audience is made up of early adopters, who are already in the palm of their hand.

Synthetic media will be made viable, not by enthusiastic gamers, but by hungry entrepreneurs (and the fat cats they aspire to be).

Look at the history of technology and capitalism and you’ll see…the easiest way for new tech to go unchecked?

Convince businesses they can’t exist without it.

Reader, you are the ideal client.

For maximum effect, I encourage you to re-read the above sentence to yourself as Maury Povich.

In the context of capitalism, where “scaling” is the ultimate goal, companies like Hour One would have us skip right over the ethical minefield that is “the character economy,” and focus on how we can finally do the impossible.

Scale our selves.

The value proposition behind synthetic media is that human connection sells more, but simply isn’t scalable.

Our humanity is getting in the way of our profitability.

Why go through the time and hassle of employing live people to connect with your customers when a robot, or a “Real” in this case, will do the trick…without unionizing midway through?!

Diversity? Equity?! Inclusion?!?! Sounds like people problems.

Nip those pesky personnel pain points right in the bud, before you can say, “Let’s get that paperwork started!” (Because there won’t be any.)

Our modern brand culture owes its existence to this argument.

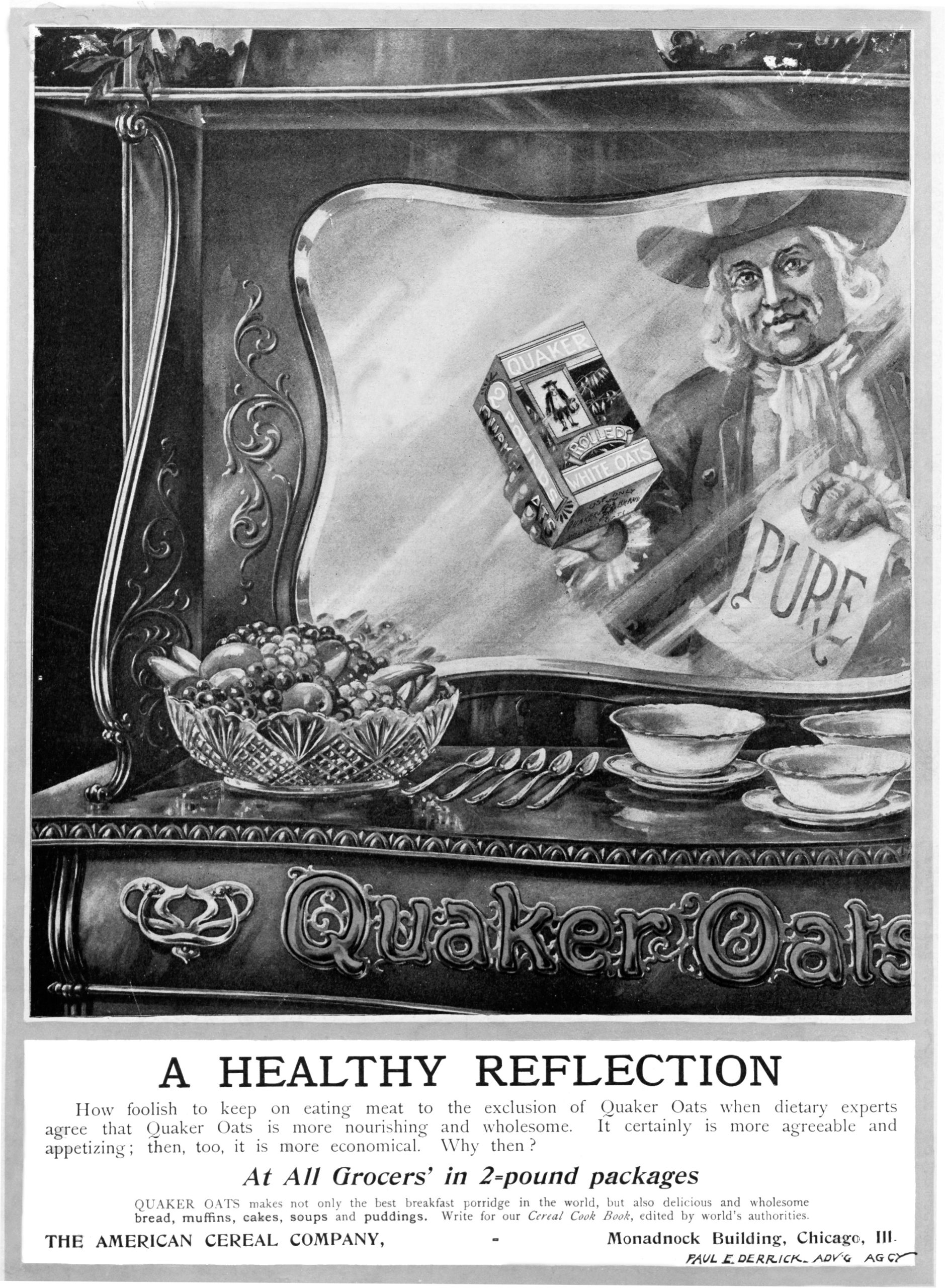

200 years ago, brands were born to replace the friendly shopkeeper you knew by name, scooping your various & sundry items from unmarked barrels at the general store.

We were naturally uneasy buying from unknown companies.

But if we didn’t get comfy — and quick — the Gilded Age couldn’t reach its full glory.

All this fancy new technology, pumping pollution into our newly colonized skies and driving thousands of poorly paid workers to early graves, needed to produce profits.

People buy from people — but you can’t scale a shopkeeper — so marketers invented brands to build a sense of familiarity and relationship between consumers and companies, and they put people on the labels instead.

Enter Larry, the Quaker Oats man, and the brand-name friends that would soon follow.

“Quaker” was chosen as a brand name, with Larry the human mascot in traditional “Quaker garb,” because the American public associated the word with the “purity” and “honesty” of the Quaker religion.

Ironically — or not-so-ironically, depending on how you look at it — none of the Quaker Oats founders were actual Quakers.

Over the course of the 20th century, marketers learned that the more they could create brand experiences that felt like human experiences — creating whole emotional worlds, cultures, communities around their products — the less it mattered what they were selling.

If you can make someone feel, you can make someone buy.

And marketers discovered that the easiest way to appeal to our emotions is through human connection.

The “human” part, in this context, is simply a means to an inhuman end.

Like I wrote in last week’s post on The Age of the Personal Brand:

Marketers have spent almost 200 years trying to make brands that feel like people.

Now, marketers will spend the next 200 years trying to make people feel like brands.

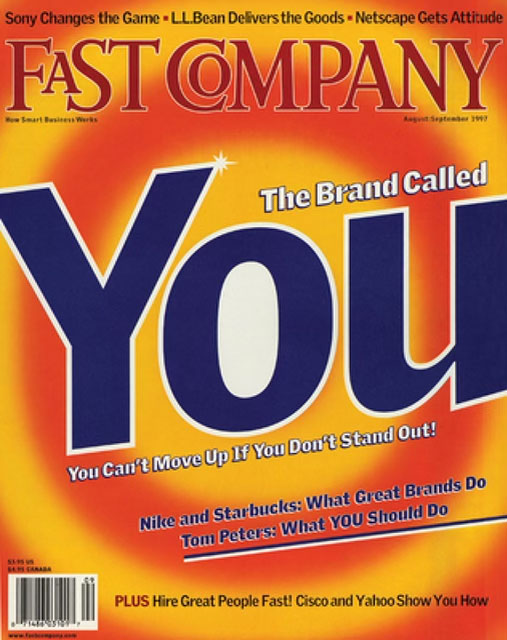

“You’re every bit as much a brand as Nike, Coke, Pepsi, or the Body Shop,” Tom Peters wrote when he sold us the potential of personal branding in 1997, also in Fast Company.

But synthetic media turns Peters’s fairy tale of self-branding as empowerment into an episode of Black Mirror.

The Matrix came out two years after the term “personal brand” was coined, and it captivated us precisely because of the horror of a dystopian future where the lines between “real” and “artificial” collapse.

Where humans are rendered mere power sources for the very system that robbed them of their humanity.

Sound familiar?

Neo came to save us from exactly what (deepfake) Keanu is giggling about in his Verge interview.

The illusion that we are free to be you and me, when in reality, we are serving a system that doesn’t care about us.

Synthetic media speaks to a pain point that only exists in the fiction of this system.

Human interaction isn’t scalable because humans don’t need it to be.

Capitalism does.

Reeves is a red (pill) herring.

He and his personal brand are here to distract us, even soothe us into a false sense of security, with his participation in the conversation around synthetic media.

It’s a masterful marketing move on behalf of the makers of the metaverse.

A plot twist we all should have seen coming a mile away.

In this matrix, Neo doesn’t save us. He sells us out.
